A production-grade data pipeline and quantitative backtesting engine for horse racing analytics. This project demonstrates end-to-end work across three problem areas that each carry their own engineering challenges:

- Anti-bot data acquisition: collecting hundreds of thousands of records from a Cloudflare-protected ASP.NET WebForms site using a layered bypass strategy (TLS fingerprint impersonation as the primary path, full headed browser automation as fallback).

- High-throughput parameter search: exhaustive evaluation of millions of strategy configurations on a 12-month dataset using multiprocessing.Pool across all available CPU cores.

- Honest validation: walk-forward train/test split to distinguish real edges from overfit noise, with reproducible Excel reporting.

The codebase is sanitized for public release: real source URLs are replaced with placeholders, no real scraped data is included, and a synthetic dataset generator is provided so the engine can be run end-to-end with one command.

Most "scraper portfolio projects" you see on GitHub are 50 lines of requests.get() + BeautifulSoup against a static site. This one solves the problem you actually hit in real freelance work: the site is hostile to scraping, the obvious approaches all fail, and the only thing that works is building a layered fallback ladder — TLS impersonation when possible, real-browser automation when not.

Most "backtest portfolio projects" optimize a single strategy and report the in-sample ROI. This one runs an exhaustive grid search over 2.26 million parameter combinations across 15 cores, then runs walk-forward validation on a 9-month train / 3-month test split to check whether the strategies survive on unseen data.

Both pieces are wired together end-to-end: scraper output feeds the engine, engine output is a multi-sheet Excel deliverable.

git clone https://github.com/yourname/racing-scraper-backtest-engine.git

cd racing-scraper-backtest-engine

pip install -r requirements.txt

# Generate synthetic dataset (1000 races, ~10k runners) and run the engine

python run_demo.py

Output: data/output/demo_results.xlsx, a multi-sheet Excel workbook with strategy recommendations, modifier breakdowns by day-of-week / class / track condition, walk-forward validation, and Kelly stake sizing.

The demo runs in about a minute on a modern laptop. The same pipeline against a real 12-month dataset (~210k runners, 190k enriched past starts) takes 5–10 minutes for the grid search on a 16-core box.

┌──────────────────────────────────────┐
│  STAGE 1: API harvest                │
│  curl-cffi + Chrome TLS impersonation│
│  → ~210k runner records              │
└─────────────┬────────────────────────┘
              │ runners.csv
              ▼
┌──────────────────────────────────────┐
│  STAGE 2: enrichment                 │
│  Playwright (real Chromium)          │
│  bypasses Cloudflare + ASP.NET       │
│  → ~190k past starts                 │
└─────────────┬────────────────────────┘
              │ history.csv
              ▼
┌──────────────────────────────────────┐
│  MERGE & ENRICH                      │
│  pandas merge on (HorseID,Date,Track)│
│  + race-class parser                 │
│  + track-type classifier (Metro/     │
│    Provincial/Country)               │
└─────────────┬────────────────────────┘
              │ pool DataFrame
              ▼
┌──────────────────────────────────────┐
│  GRID SEARCH (multiprocessing)       │
│  2.26M combos, 15-core Pool          │
│  two-tier: base filter + modifiers   │
└─────────────┬────────────────────────┘
              │ ranked configs
              ▼
┌──────────────────────────────────────┐
│  WALK-FORWARD VALIDATION             │
│  9mo train / 3mo test                │
│  → reject overfit, keep real edges   │
└─────────────┬────────────────────────┘
              │
              ▼
┌──────────────────────────────────────┐
│  EXCEL OUTPUT                        │
│  15+ sheets: Overview, Plans,        │
│  Volume options, modifier tables     │
└──────────────────────────────────────┘

Each stage is a self-contained module with explicit inputs/outputs. The scraper can be skipped entirely if you have your own dataset — the engine reads CSV.
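To make the merge step concrete, here is a minimal stdlib-only sketch of a left join on the (HorseID, Date, Track) key. The pipeline itself uses a pandas merge, so this is a stand-in for illustration, not the project's code. The `Price` and `LastStartPos` columns and the sample rows are invented for the example.

```python
import csv
import io

KEY = ("HorseID", "Date", "Track")  # merge key named in the pipeline diagram


def read_rows(csv_text):
    """Parse CSV text into a list of dict rows (all values stay strings)."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def left_join(runners, history, key=KEY):
    """Left-join history onto runners; unmatched runners keep only their own columns."""
    # If history repeats a key, the last row for that key wins in this sketch.
    index = {tuple(h[k] for k in key): h for h in history}
    merged = []
    for r in runners:
        match = index.get(tuple(r[k] for k in key), {})
        merged.append({**match, **r})  # runner columns win on name clashes
    return merged


# Hypothetical sample data; Price and LastStartPos are illustrative columns.
RUNNERS_CSV = """HorseID,Date,Track,Price
101,2024-03-02,TrackA,4.50
102,2024-03-02,TrackA,9.00
"""
HISTORY_CSV = """HorseID,Date,Track,LastStartPos
101,2024-03-02,TrackA,2
"""

pool = left_join(read_rows(RUNNERS_CSV), read_rows(HISTORY_CSV))
for row in pool:
    print(row)
```

With pandas, the equivalent call would be along the lines of `runners.merge(history, on=["HorseID", "Date", "Track"], how="left")`; whether the project uses a left or inner join is not stated here.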

The target site sits behind Cloudflare and uses ASP.NET WebForms with __VIEWSTATE / __EVENTVALIDATION tokens. Eight different scraping approaches were tested before settling on the final two-tier strategy:

| # | Approach | Result |
|---|---|---|
| 1 | requests.get() with browser headers | 403 Forbidden (TLS fingerprint) |
| 2 | requests + persistent cookies | 403 Forbidden |
| 3 | cloudscraper library | Outdated, fails on modern challenge |
| 4 | httpx with HTTP/2 | 403 Forbidden |
| 5 | Selenium with stealth plugin | Detected as automation |
| 6 | Selenium-undetected-chromedriver | Worked initially, broke after CF update |
| 7 | curl-cffi with Chrome impersonation | Works for the public API endpoint |
| 8 | Playwright headed Chromium + persistent session | Works for the protected pages |
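Whichever approach fetches the page, a WebForms postback has to echo the hidden state tokens back to the server. The sketch below shows one way to collect them with the standard library and build a postback payload. The sample HTML, the token values, and the control name `ctl00$grid$next` are made up; `__EVENTTARGET` and `__EVENTARGUMENT` are the standard WebForms postback fields.

```python
from html.parser import HTMLParser
from urllib.parse import urlencode


class HiddenFieldParser(HTMLParser):
    """Collect name/value pairs from <input type="hidden"> elements."""

    def __init__(self):
        super().__init__()
        self.fields = {}

    def handle_starttag(self, tag, attrs):
        if tag != "input":
            return
        a = dict(attrs)
        if (a.get("type") or "").lower() == "hidden" and a.get("name"):
            self.fields[a["name"]] = a.get("value") or ""


def build_postback(html, event_target, extra=None):
    """Return the form payload for a postback triggered by `event_target`."""
    parser = HiddenFieldParser()
    parser.feed(html)
    payload = dict(parser.fields)           # __VIEWSTATE, __EVENTVALIDATION, ...
    payload["__EVENTTARGET"] = event_target
    payload.setdefault("__EVENTARGUMENT", "")
    payload.update(extra or {})
    return payload


# Hypothetical page fragment; real token values are long opaque strings.
SAMPLE = """
<form method="post" action="./Results.aspx">
  <input type="hidden" name="__VIEWSTATE" value="dDwtMTIz" />
  <input type="hidden" name="__EVENTVALIDATION" value="ZXZhbA==" />
  <input type="text" name="txtSearch" value="" />
</form>
"""

payload = build_postback(SAMPLE, "ctl00$grid$next")
body = urlencode(payload)  # form-encoded body for the POST request
print(sorted(payload))
```

The tokens change on every response, so each postback needs to be built from the page that was just received rather than from a cached copy.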

The key insight: Cloudflare's TLS fingerprinting (JA3) blocks Python's standard ssl module because Python's TLS handshake looks nothing like a real Chrome handshake. curl-cffi solves this by linking against curl-impersonate, which replays the exact TLS extensions, cipher suite ordering, and ALPN preferences that a real Chrome 120+ would send.

For pages where the site additionally runs browser-side JavaScript challenges, no HTTP-level approach works; only a real browser does. Playwright with headless=False and a persistent session storage file gets through.
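The two tiers can be wired into a simple fallback ladder. The following is a rough sketch, not the code in src/scraper: the function names, the `state.json` path, the `chrome120` target, and the "anything other than HTTP 200 is a failure" rule are assumptions. The third-party imports are done lazily so the ladder logic can be exercised without either library installed.

```python
def fetch_via_tls_impersonation(url):
    """Tier 1: plain HTTP with a Chrome-like TLS handshake (needs curl_cffi)."""
    from curl_cffi import requests  # lazy import: only needed when this tier runs

    resp = requests.get(url, impersonate="chrome120", timeout=30)
    if resp.status_code != 200:
        raise RuntimeError(f"tier 1 got HTTP {resp.status_code}")
    return resp.text


def fetch_via_browser(url, state_path="state.json"):
    """Tier 2: headed Chromium reusing a saved session (needs playwright).

    Assumes state_path already exists, e.g. saved after a manual first visit.
    """
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=False)
        context = browser.new_context(storage_state=state_path)
        page = context.new_page()
        page.goto(url, wait_until="networkidle")
        html = page.content()
        context.storage_state(path=state_path)  # persist refreshed cookies
        browser.close()
    return html


def fetch_with_fallback(url, tiers):
    """Try each (name, fetcher) in order; return (name, html) from the first success."""
    errors = []
    for name, fetcher in tiers:
        try:
            return name, fetcher(url)
        except Exception as exc:  # broad on purpose: any failure moves down the ladder
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all tiers failed: " + "; ".join(errors))


# Typical wiring (not executed here because it needs network access):
# tiers = [("tls", fetch_via_tls_impersonation), ("browser", fetch_via_browser)]
# tier_used, html = fetch_with_fallback("https://example.com/placeholder", tiers)
```

Ordering the tiers from cheapest to most expensive means the headed browser only starts for pages the HTTP tier cannot reach.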

Full writeup with code excerpts: docs/anti_bot_bypass.md

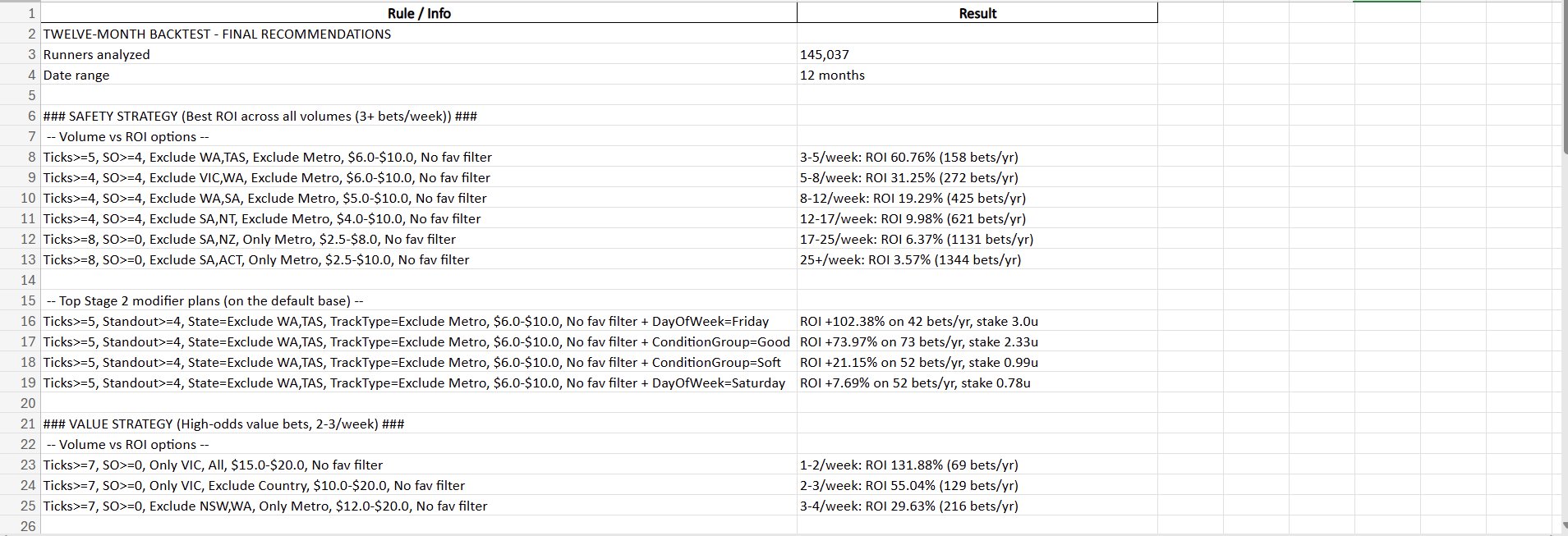

The engine produces a multi-sheet Excel report. Here's what each section looks like (screenshots from a 12-month run on ~145k runners):

A naive grid over 7 ticks × 7 price-min × 6 price-max × 6 standout × 4 fav-toggle × 36 state-filters × 7 track-type-filters gives about 1.78M raw combos per strategy, or about 3.56M across the 2 strategies. After excluding invalid combos, ~2.26M combinations were evaluated in the real run. Single-process pandas over 210k rows × 2.26M filters would take hours.

The engine uses multiprocessing.Pool with imap_unordered and a chunksize of 50, which on a 16-core box completes the search in 4–7 minutes. On Linux, workers inherit the DataFrame through fork. Under the spawn start method (Windows, and the default on macOS since Python 3.8) it would otherwise be pickled into every worker, so on Windows the DataFrame is shared via Manager to avoid per-worker memory blowup.
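A stripped-down version of that pattern is sketched below with plain tuples instead of a DataFrame. The three-parameter grid, the validity rule (price-min below price-max), and the row layout `(price, is_favourite, won)` are simplifications chosen for the example, not the engine's actual schema.

```python
import itertools
from multiprocessing import Pool

_ROWS = None  # per-worker copy of the dataset, set once by the initializer


def init_worker(rows):
    """Runs once in each worker so the data is not re-sent with every task."""
    global _ROWS
    _ROWS = rows


def is_valid(combo):
    """Assumed validity rule: the price band must be non-empty."""
    price_min, price_max, _fav_only = combo
    return price_min < price_max


def valid_combos(grid):
    """Cartesian product of the parameter axes, minus invalid combinations."""
    return [c for c in itertools.product(*grid) if is_valid(c)]


def evaluate(combo):
    """Flat 1-unit stakes: ROI = profit / bets over rows that pass the filter."""
    price_min, price_max, fav_only = combo
    bets = 0
    profit = 0.0
    for price, is_fav, won in _ROWS:
        if not (price_min <= price <= price_max):
            continue
        if fav_only and not is_fav:
            continue
        bets += 1
        profit += (price - 1.0) if won else -1.0
    roi = profit / bets if bets else 0.0
    return combo, bets, roi


def grid_search(rows, grid, processes=None, chunksize=50):
    """Evaluate every valid combo in parallel and rank by ROI, best first."""
    combos = valid_combos(grid)
    with Pool(processes, initializer=init_worker, initargs=(rows,)) as pool:
        results = list(pool.imap_unordered(evaluate, combos, chunksize=chunksize))
    return sorted(results, key=lambda r: r[2], reverse=True)
```

Call `grid_search(rows, grid, processes=15)` from under an `if __name__ == "__main__":` guard, which the spawn start method requires. A larger chunksize reduces inter-process overhead when each evaluation is cheap, at the cost of coarser load balancing.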

Two-tier strategy architecture separates two concerns:

- Stage 1 (base filter) — UI-selectable settings: min ticks, price band, state inclusion/exclusion, track-type filter, favourite toggle. These define the pool of bets the user is willing to consider.

- Stage 2 (modifiers) — additional dimensions (day-of-week, race class group, track condition) that further refine the pool. Each modifier value is scored against the base pool's ROI to compute an edge and a confidence (sample-size-adjusted).
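One plausible way to score a modifier is sketched below. The edge is the modifier value's ROI minus the base pool's ROI, as described above. The confidence formula `n / (n + k)` is an assumption standing in for whatever sample-size adjustment the engine actually applies, and the field names are hypothetical.

```python
from collections import defaultdict


def roi(bets):
    """ROI at flat 1-unit stakes for a list of (price, won) pairs."""
    if not bets:
        return 0.0
    profit = sum((price - 1.0) if won else -1.0 for price, won in bets)
    return profit / len(bets)


def score_modifier(pool, modifier, shrink_k=50):
    """Score each value of one modifier column against the whole base pool.

    pool: list of dicts with "price", "won" and the modifier column.
    shrink_k is an assumed prior weight, not a value taken from the project.
    """
    base_roi = roi([(r["price"], r["won"]) for r in pool])
    groups = defaultdict(list)
    for r in pool:
        groups[r[modifier]].append((r["price"], r["won"]))

    scores = {}
    for value, bets in groups.items():
        n = len(bets)
        edge = roi(bets) - base_roi
        confidence = n / (n + shrink_k)  # approaches 1 as the sample grows
        scores[value] = {
            "n": n,
            "edge": edge,
            "confidence": confidence,
            "adjusted_edge": edge * confidence,
        }
    return scores


# Tiny made-up pool; "day" plays the role of the day-of-week modifier.
demo_pool = [
    {"price": 3.0, "won": True, "day": "Sat"},
    {"price": 3.0, "won": False, "day": "Sat"},
    {"price": 4.0, "won": False, "day": "Wed"},
    {"price": 2.0, "won": False, "day": "Wed"},
]
demo_scores = score_modifier(demo_pool, "day", shrink_k=2)
for value, s in demo_scores.items():
    print(value, s)
```

Shrinking the edge by sample size keeps a modifier value with a handful of lucky winners from outranking one with a smaller but better-supported edge.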

The output is a list of concrete actionable rules: "Base + DayOfWeek=Saturday → ROI +X% over N bets, suggested stake Y units (fractional Kelly capped at 3u)."
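For the stake suggestion, a capped fractional Kelly calculation might look like the sketch below. It uses the standard Kelly fraction for decimal odds, f = (p × odds − 1) / (odds − 1). The 3-unit cap comes from the rule format above; the 100-unit bankroll and the 0.25 Kelly multiplier are assumptions for illustration.

```python
def kelly_fraction(win_prob, decimal_odds):
    """Full-Kelly fraction of bankroll for a bet at decimal odds; 0 if no edge."""
    b = decimal_odds - 1.0  # net winnings per unit staked
    if b <= 0:
        return 0.0
    f = (win_prob * decimal_odds - 1.0) / b
    return max(f, 0.0)


def suggested_stake(win_prob, decimal_odds, bankroll_units=100.0,
                    kelly_multiplier=0.25, cap_units=3.0):
    """Fractional Kelly stake in units, capped at cap_units.

    bankroll_units and kelly_multiplier are assumed defaults, not project values.
    """
    raw = kelly_fraction(win_prob, decimal_odds) * kelly_multiplier * bankroll_units
    return round(min(raw, cap_units), 2)


print(suggested_stake(0.30, 4.0))  # small edge, stake below the cap
print(suggested_stake(0.50, 3.0))  # large edge, stake hits the cap
print(suggested_stake(0.20, 4.0))  # negative expectation, no bet
```

The cap matters because the win probability fed into Kelly is itself an estimate from a backtest, so full-size stakes would amplify any estimation error.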

Full writeup: docs/grid_search.md

A grid search of 2.26M combinations will find configurations that look profitable on any random dataset — that's the multiple-testing problem. To distinguish real edges from data-mined noise, the engine runs a walk-forward split:

- Train: months 1–9 of the dataset

- Test: months 10–12

The best strategies from the grid search are then re-evaluated separately on train and test. If the test ROI is in the same ballpark as the train ROI, the edge is plausibly real. If the test ROI collapses (or goes negative) while train looks great, the strategy is overfit and is dropped.
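The split and the keep/drop decision can be sketched as follows. This is an illustration rather than the contents of src/validation/walk_forward.py: the `(date, price, won)` row layout is hypothetical, and `min_retention=0.5` is an assumed threshold standing in for "same ballpark".

```python
from datetime import date


def add_months(d, months):
    """First day of the month that is `months` after d's month."""
    idx = d.year * 12 + (d.month - 1) + months
    return date(idx // 12, idx % 12 + 1, 1)


def split_walk_forward(bets, train_months=9):
    """Split (date, price, won) bets at the month boundary after the training window."""
    start = min(b[0] for b in bets)
    cutoff = add_months(start, train_months)
    train = [b for b in bets if b[0] < cutoff]
    test = [b for b in bets if b[0] >= cutoff]
    return train, test


def roi(bets):
    """ROI at flat 1-unit stakes."""
    if not bets:
        return 0.0
    profit = sum((price - 1.0) if won else -1.0 for _, price, won in bets)
    return profit / len(bets)


def verdict(train_roi, test_roi, min_retention=0.5):
    """Keep a strategy only if test ROI is positive and retains part of train ROI."""
    if train_roi <= 0 or test_roi <= 0:
        return "drop"
    return "keep" if test_roi >= min_retention * train_roi else "drop"


# Made-up bets for one strategy across a 12-month window.
demo_bets = [
    (date(2024, 1, 15), 3.0, True),
    (date(2024, 5, 2), 3.0, False),
    (date(2024, 9, 30), 2.0, True),
    (date(2024, 10, 1), 4.0, True),
    (date(2024, 12, 20), 4.0, False),
]
train, test = split_walk_forward(demo_bets)
print(len(train), len(test), verdict(roi(train), roi(test)))
```

Splitting by calendar date rather than at random keeps every test bet strictly later than every training bet, which is what makes the test period a fair stand-in for unseen data.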

On the original real-world run, the top strategies retained positive ROI on out-of-sample data, suggesting the edges generalize beyond the in-sample period.

See src/validation/walk_forward.py and the validation section in docs/architecture.md.

racing-scraper-backtest-engine/
├── README.md  # this file
├── LICENSE  # MIT
├── requirements.txt
├── run_demo.py  # end-to-end demo on synthetic data
├── src/
│   ├── scraper/
│   │   ├── api_scraper.py  # Stage 1: curl-cffi + TLS impersonation
│   │   ├── browser_scraper.py  # Stage 2: Playwright fallback
│   │   └── parser.py  # HTML → structured rows
│   ├── engine/
│   │   ├── grid_search.py  # multiprocessing parameter search
│   │   ├── modifiers.py  # Stage 2 modifier scoring + Kelly staking
│   │   └── excel_report.py  # multi-sheet Excel output
│   ├── validation/
│   │   └── walk_forward.py  # train/test split validator
│   └── utils/
│       ├── track_types.py  # Metro/Provincial/Country classifier
│       └── race_class.py  # race-class parser & grouping
├── data/
│   ├── synthetic/
│   │   └── generate_synthetic.py  # creates demo CSVs
│   └── output/  # Excel results land here (gitignored)
├── docs/
│   ├── anti_bot_bypass.md  # detailed bypass writeup
│   ├── architecture.md  # data flow and design decisions
│   └── grid_search.md  # engine internals
└── tests/
    ├── test_parser.py
    ├── test_track_classifier.py
    ├── test_race_class.py
    └── test_grid_search.py

- Python 3.10+

- pandas, numpy: data manipulation

- curl-cffi: Chrome TLS impersonation

- playwright: headed browser automation

- beautifulsoup4, lxml: HTML parsing

- openpyxl: Excel output

- pytest: testing

To keep the repo focused on engineering and to avoid glamorizing gambling:

- No betting account integration. The engine's output is an Excel file. What you do with it is your business.

- No "guaranteed strategy" claims. Walk-forward validation is the closest thing to honest evaluation; even strategies that pass it can fail in production due to regime change, market efficiency, or simple bad luck.

- No real scraped data. The synthetic generator produces data with the same schema and similar statistical properties, but no actual races or horses.

MIT. See LICENSE.

This project is for educational and portfolio purposes. The author is not responsible for any losses incurred from following any output of this code.