Agentless, multi-node GPU allocation manager (SSH + nvidia-smi only)

Screenshots are taken from a real environment; sensitive details (node names, usernames, file paths) have been redacted with Nano Banana.

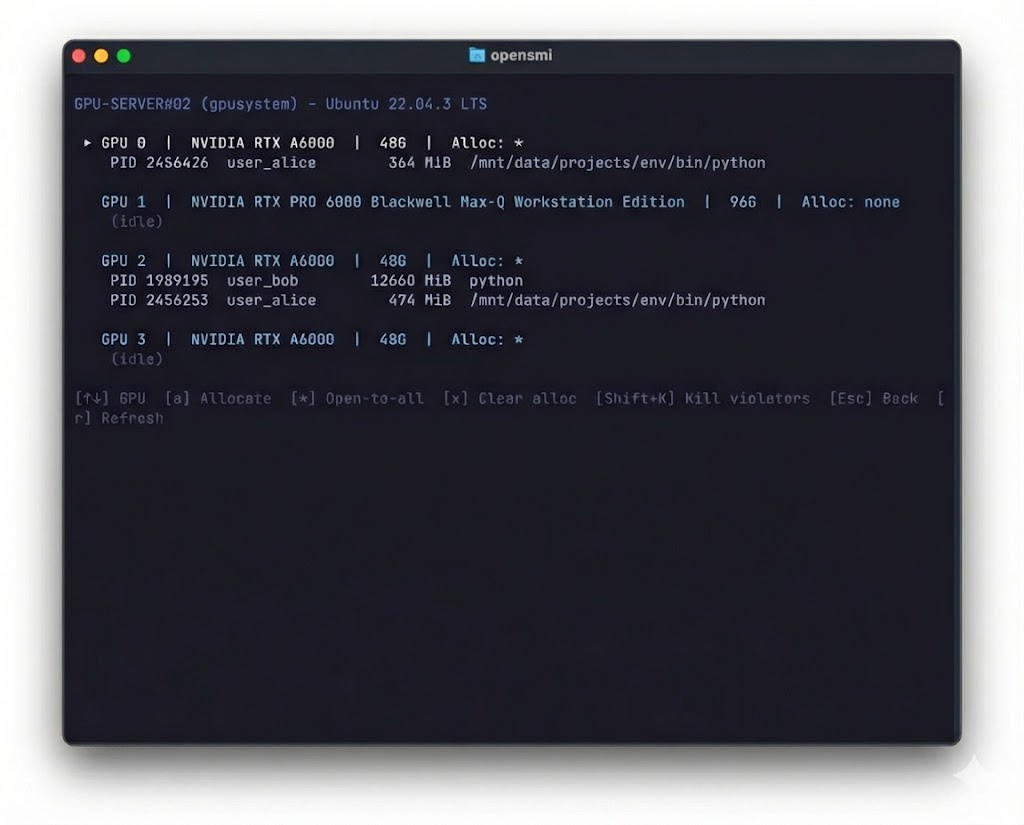

opensmi helps teams monitor and enforce GPU allocations across a self-managed cluster without installing anything on GPU nodes.

It runs from your terminal, connects over SSH, and reads nvidia-smi.

- Interactive TUI — live dashboard, node detail, GPU runner, job tracker

- Multi-cluster tab bar — switch between SSH clusters and Slurm clusters in one view

- Slurm GPU monitoring — read-only per-node GPU usage via Slurm APIs (no SSH to compute nodes)

- CLI — poll, allocate, detect violations, watch, kill, exec

- Policy enforcement: unallocated GPU usage is a violation; `*` = open to all

- No agents or daemons on GPU nodes

- Python stdlib only — zero pip dependencies for the CLI

Recommended — installs both CLI + TUI:

curl -fsSL https://raw.githubusercontent.com/seilk/opensmi/main/scripts/install.sh | bash

Binaries land in ~/.local/bin. The installer auto-detects your shell (zsh/bash/fish) and prints the exact PATH line to add, or offers to add it for you when run interactively.

Requirements: macOS or Linux · Python 3.9+ · SSH access to GPU nodes with nvidia-smi

opensmi update

Replaces the CLI, TUI binary, and wrapper in one step. No uninstall needed.

If you hit GitHub API rate limits: export OPENSMI_GITHUB_TOKEN=<token>

opensmi uninstall # remove CLI + TUI

opensmi uninstall --dry-run # preview what would be removed

To also wipe state and config (irreversible):

opensmi uninstall --purge-state --yes

# 1. Create config (interactive wizard)

opensmi onboard

# 2. Verify SSH connectivity + GPU visibility

opensmi poll

# 3. Launch the TUI

opensmi

Config is written to ~/.opensmi/opensmi.json by default.

Override with --config <path> or OPENSMI_CONFIG.
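The lookup order can be sketched in Python as follows. This is an illustration, not opensmi's actual code: `resolve_config_path` is a hypothetical helper, and letting `--config` win over `OPENSMI_CONFIG` is an assumption, since the text above does not say which one takes precedence when both are set.

```python
import os
from pathlib import Path
from typing import Mapping, Optional

DEFAULT_CONFIG = "~/.opensmi/opensmi.json"  # default location named above


def resolve_config_path(cli_path: Optional[str] = None,
                        env: Optional[Mapping[str, str]] = None) -> Path:
    """Pick the config file: --config, then OPENSMI_CONFIG, then the default.

    The flag-over-environment precedence is an assumption of this sketch.
    """
    env = os.environ if env is None else env
    chosen = cli_path or env.get("OPENSMI_CONFIG") or DEFAULT_CONFIG
    return Path(chosen).expanduser()
```

Passing `env` explicitly keeps the helper easy to test without touching the real environment.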

Launch with:

opensmi

The top bar shows: cluster name · user@hostname · GPUs used/total · Violations · Poll time

A tab bar at the very top of the TUI shows all configured clusters. Press Tab / Shift+Tab to cycle, or click a tab directly.

- SSH clusters: defined in `clusters[]` in your config, polled via SSH + nvidia-smi

- Slurm clusters: defined in `slurm_clusters[]`, show per-node GPU usage via Slurm APIs (read-only, no SSH to compute nodes)

When a newer version is available, the version label on the right of the tab bar turns yellow: opensmi@0.2.5 → 0.2.6 ↑

To switch tabs, press Ctrl+X T to open the tab switcher, then press a tab's shortcut key or use the arrow keys.

| Shortcut | Tab | Description |
|---|---|---|
| `d` | Dashboard | Live GPU grid: who's using what, per node |
| `n` | Node Detail | Per-GPU memory, utilization, process list (enter from Dashboard via Enter) |
| `g` | My GPUs | Personal GPU view for the current operator |
| `j` | Jobs | Track queued, running, and finished jobs |
| `s` | Setup | Per-node env config (conda, venv, work dir) |
| `h` | Help | Keyboard shortcuts reference |

Note: Node Detail is a hidden tab; navigate to it by selecting a node in the Dashboard and pressing `Enter`.

Allocation management (`a` allocate, `x` clear, `Shift+K` kill) is done directly from the Dashboard, not from a separate tab.

The Command Runner is a persistent pane at the bottom of the screen (not a tab). Focus it with Ctrl+X ↓.

Global shortcuts (work from any tab):

| Key | Action |
|---|---|
| `Ctrl+X T` | Open tab switcher |
| `Ctrl+X ↓` | Focus command runner pane |
| `Ctrl+X F` | Fold / unfold runner pane |
| `Ctrl+X Q` | Quit |

The runner pane sits at the bottom of the TUI at all times. Focus it with Ctrl+X ↓, type a command, and execute with Ctrl+X Enter. Press Esc to unfocus.

Execution modes (Tab to toggle):

- `direct`: background process, output captured

- `tmux`: creates a tmux session you can attach to

Distribution modes (Shift+Tab to toggle):

- `single`: one command across multiple GPUs (`CUDA_VISIBLE_DEVICES=0,1,2`)

- `one-to-one`: different command per GPU (e.g., cross-validation folds)
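To make the difference between the two distribution modes concrete, here is a minimal Python sketch. `plan_launches` is a hypothetical helper written for this README, not part of opensmi; it only shows how each mode could translate into `CUDA_VISIBLE_DEVICES` values.

```python
from typing import Dict, List, Tuple


def plan_launches(mode: str, gpus: List[int],
                  commands: List[str]) -> List[Tuple[Dict[str, str], str]]:
    """Return (environment, command) pairs for a hypothetical launcher."""
    if mode == "single":
        if len(commands) != 1:
            raise ValueError("single mode takes exactly one command")
        # One process sees every selected GPU.
        visible = ",".join(str(g) for g in gpus)
        return [({"CUDA_VISIBLE_DEVICES": visible}, commands[0])]
    if mode == "one-to-one":
        if len(commands) != len(gpus):
            raise ValueError("one-to-one mode needs one command per GPU")
        # Each command is pinned to exactly one GPU.
        return [({"CUDA_VISIBLE_DEVICES": str(g)}, cmd)
                for g, cmd in zip(gpus, commands)]
    raise ValueError(f"unknown distribution mode: {mode}")
```

For example, three cross-validation folds on GPUs 0, 1, and 2 in `one-to-one` mode yield three launches, each with a single visible device.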

GPU assignment (g to toggle):

- `auto`: ranks GPUs by idleness, last-used time, utilization

- `manual`: click GPUs in the panel to select
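A ranking like the one `auto` describes could look roughly like this in Python. The `GpuState` fields and the exact ordering (idle first, then least recently used, then lowest utilization) are assumptions made for illustration; opensmi's real scoring may differ.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class GpuState:
    index: int
    busy: bool          # True if any process is running on the GPU
    last_used: float    # Unix timestamp of the last observed activity
    utilization: int    # percent, as reported by nvidia-smi


def rank_gpus(gpus: List[GpuState]) -> List[int]:
    """Order GPU indices from most to least attractive (assumed ordering)."""
    # Tuples sort element by element: idle (False) before busy (True),
    # then older last_used, then lower utilization.
    ranked = sorted(gpus, key=lambda g: (g.busy, g.last_used, g.utilization))
    return [g.index for g in ranked]
```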

Queue mode (q to toggle):

- `immediate`: runs now

- `queued`: saves to job queue for auto-dispatch when GPUs free up
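The idea behind `queued` mode can be sketched as a small dispatcher. Everything here is hypothetical: the `(job_id, gpus_needed)` queue entries and the strict first-in, first-out policy without backfilling are assumptions, not a description of opensmi's scheduler.

```python
from collections import deque
from typing import Deque, Dict, List, Tuple


def dispatch(queue: Deque[Tuple[str, int]],
             free_gpus: List[int]) -> Dict[str, List[int]]:
    """Start queued jobs in FIFO order while enough GPUs are free.

    Each queue entry is (job_id, gpus_needed). Started jobs are removed
    from the queue; the head job blocks later ones if it does not fit.
    """
    free = list(free_gpus)
    started: Dict[str, List[int]] = {}
    while queue and queue[0][1] <= len(free):
        job_id, needed = queue.popleft()
        started[job_id], free = free[:needed], free[needed:]
    return started
```

Calling `dispatch` after every poll would start waiting jobs as soon as their GPU count becomes available.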

Preflight checks run before execution: tmux availability, command syntax, GPU availability.

Tracks all submitted jobs (immediate and queued). From the detail view you can:

- View live output from tmux sessions

- Retry the last command on a session

- Cancel or delete a job record

- Clean up finished tmux sessions

opensmi poll # snapshot cluster GPU state

opensmi violations # list allocation violations (live)

opensmi alloc list # show all allocations

opensmi job list # list jobs

opensmi job list --status running # filter by status

opensmi log # tail opensmi debug logs

opensmi log --follow # live log stream

opensmi --help # full command list

All commands support --json for machine-readable output where applicable.

Admin actions require the operator to be listed in `opensmi.json` under `admins.master` or `admins.members`, and to have remote sudo-group membership on target nodes.

Allocations define which user is allowed on which GPU. Without an allocation, any GPU usage is a violation.

opensmi alloc list # show all allocations

opensmi alloc set GPU-01 0 alice # assign GPU 0 on GPU-01 to alice

opensmi alloc set GPU-01 1 '*' # open GPU 1 to everyone

opensmi alloc clear GPU-01 0 # remove allocation

opensmi alloc seed # auto-seed from live usage

opensmi alloc seed --force # overwrite existing allocations

Special target `*` means any user is allowed on that GPU.
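The allocation rule above (no allocation means violation, `*` means anyone) can be expressed in a few lines of Python. This is a sketch of the rule only; the data shapes and the name `find_violations` are invented for the example and do not reflect opensmi's internal state format.

```python
from typing import Dict, List, Tuple

Allocations = Dict[Tuple[str, int], str]   # (node, gpu index) -> user or "*"
Usage = Tuple[str, int, str]               # (node, gpu index, process owner)


def find_violations(allocations: Allocations,
                    usage: List[Usage]) -> List[Usage]:
    """Return usage records that are not covered by an allocation."""
    violations = []
    for node, gpu, user in usage:
        allowed = allocations.get((node, gpu))   # None means unallocated
        if allowed != "*" and allowed != user:
            violations.append((node, gpu, user))
    return violations
```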

opensmi violations # one-shot violation check (exit 1 if any)

opensmi watch # poll every 60s, print new violations

opensmi watch --interval 30 # custom poll interval (seconds)

opensmi watch --slack-webhook <url> # send alerts to Slack

`violations` exits 0 (clean) or 1 (violations found), which makes it suitable for CI/cron.
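A cron or CI wrapper can rely on that exit status alone. The Python sketch below assumes `opensmi` is on PATH; `check_violations` is a hypothetical helper, and treating any status other than 0 or 1 as an error is an assumption, since only those two codes are documented here.

```python
import subprocess
from typing import Callable, Sequence


def check_violations(
        run: Callable[[Sequence[str]], int] = subprocess.call) -> str:
    """Run `opensmi violations` and translate its exit status."""
    code = run(["opensmi", "violations"])
    if code == 0:
        return "clean"
    if code == 1:
        return "violations"
    return f"error (exit {code})"   # undocumented status: treat as failure
```

The `run` parameter exists only so the function can be exercised without a real cluster, for example `check_violations(lambda argv: 1)`.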

Send a signal to remote PIDs:

opensmi kill GPU-01 <pid> [<pid> ...]

opensmi kill GPU-01 1234 5678 --signal KILL

opensmi kill GPU-01 1234 --no-sudo # skip sudo, only own processes

Supported signals: TERM (default), KILL, INT, HUP.
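As a rough picture of what such a command has to produce on the remote side, the Python sketch below validates the signal and builds a standard `kill -s` invocation. The helper name and the exact argv are assumptions for illustration; the README does not document the command opensmi actually sends.

```python
from typing import Iterable, List

SUPPORTED_SIGNALS = ("TERM", "KILL", "INT", "HUP")  # list taken from the text above


def build_kill_command(pids: Iterable[int], signal: str = "TERM",
                       use_sudo: bool = True) -> List[str]:
    """Build the argv for a remote kill; the exact shape is hypothetical."""
    if signal not in SUPPORTED_SIGNALS:
        raise ValueError(f"unsupported signal: {signal}")
    pid_args = [str(int(p)) for p in pids]   # int() rejects non-numeric input
    if not pid_args:
        raise ValueError("at least one PID is required")
    prefix = ["sudo"] if use_sudo else []
    return prefix + ["kill", "-s", signal] + pid_args
```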

# Run a command on a node with specific GPUs

opensmi exec GPU-01 --gpus 0,1 --command "python train.py"

# Use tmux mode for long-running jobs

opensmi exec GPU-01 --gpus 0 --command "python train.py" --mode tmux

# Submit to the job queue (auto-dispatches when GPUs free up)

opensmi job submit --auto-gpus 2 --command "python train.py"

Per-node environment configuration (conda/venv activation, working directory):

opensmi node-env GPU-01 # show current config

opensmi node-env GPU-01 --env-manager conda --env-name ml # set conda env

opensmi node-env GPU-01 --work-dir ~/projects # set working dir

opensmi node-env GPU-01 --env-manager venv --env-name .venv

This config is used automatically when dispatching jobs to that node.

Verify that your SSH user has the required sudo-group membership on a node:

opensmi sudo-check GPU-01

opensmi sudo-check GPU-01 --json

Admin identity and remote sudo-group requirements are set in opensmi.json:

{

"admins": {

"master": "alice",

"members": ["alice", "bob"],

"remote_sudo_groups": ["sudo", "wheel"]

}

}

- `master` / `members`: local usernames allowed to run admin commands

- `remote_sudo_groups`: SSH user must be in one of these groups on the target node for `alloc`, `kill`, and `exec` actions
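The two checks described above can be sketched against the `admins` block like this. The function names are hypothetical and the code only mirrors the rules as stated in this README; how opensmi discovers the remote user's groups is not covered.

```python
from typing import Any, Dict, Iterable


def is_admin(config: Dict[str, Any], operator: str) -> bool:
    """True if the local operator is admins.master or in admins.members."""
    admins = config.get("admins", {})
    return (operator == admins.get("master")
            or operator in admins.get("members", []))


def has_remote_sudo(config: Dict[str, Any],
                    remote_groups: Iterable[str]) -> bool:
    """True if the SSH user belongs to at least one required group."""
    required = set(config.get("admins", {}).get("remote_sudo_groups", []))
    return bool(required & set(remote_groups))
```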

Config is plain JSON. Start from the template:

opensmi onboard # interactive wizard

opensmi init # write default template

Reference template: opensmi.example.json

Keep your real opensmi.json private — it's gitignored by default.

To monitor multiple SSH clusters as separate tabs, use the clusters array:

{

"clusters": [

{

"cluster_name": "Lab-A",

"nodes": [{ "alias": "GPU-01", "address": "10.0.0.1", "user": "ubuntu" }]

},

{

"cluster_name": "Lab-B",

"nodes": [{ "alias": "GPU-05", "address": "10.0.1.1", "user": "admin" }]

}

]

}

Single-cluster configs (root-level cluster_name + nodes) continue to work unchanged.
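If you script against the config yourself, both layouts can be read through one small normalizer. This Python sketch is not opensmi's loader: `normalize_clusters` is a hypothetical helper, and the `"default"` fallback name for a root-level config without `cluster_name` is an assumption.

```python
from typing import Any, Dict, List


def normalize_clusters(config: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Return a list of {cluster_name, nodes} dicts for either layout."""
    if "clusters" in config:
        return list(config["clusters"])
    if "nodes" in config:   # single-cluster layout at the root level
        return [{"cluster_name": config.get("cluster_name", "default"),
                 "nodes": config["nodes"]}]
    return []
```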

To add a read-only Slurm cluster tab, add slurm_clusters:

{

"slurm_clusters": [

{

"name": "HPC Cluster",

"login_node": "hpc-login",

"user": "myuser"

}

]

}

opensmi SSHes into the login node and queries sinfo/squeue/scontrol; no access to compute nodes is required.
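To give a sense of what read-only Slurm monitoring involves, the Python sketch below pulls GPU counts out of a `scontrol show node` record by reading the `CfgTRES` and `AllocTRES` lines. Which fields opensmi actually parses is not documented here, so treat the function and the sample record as assumptions; the output format can also vary between Slurm versions.

```python
import re
from typing import Dict

_GPU_TRES = re.compile(r"gres/gpu=(\d+)")


def gpu_counts(scontrol_node_text: str) -> Dict[str, int]:
    """Extract configured and allocated GPU counts from one node record."""
    counts = {"total": 0, "allocated": 0}
    for line in scontrol_node_text.splitlines():
        line = line.strip()
        for prefix, key in (("CfgTRES=", "total"),
                            ("AllocTRES=", "allocated")):
            if line.startswith(prefix):
                match = _GPU_TRES.search(line)
                if match:
                    counts[key] = int(match.group(1))
    return counts


# Hand-written sample in the usual `scontrol show node` layout.
SAMPLE = """NodeName=node01 Arch=x86_64 CoresPerSocket=16
   Gres=gpu:a100:4
   CfgTRES=cpu=64,mem=512000M,billing=64,gres/gpu=4
   AllocTRES=cpu=8,mem=64G,gres/gpu=2
"""
```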

Key environment variables:

| Variable | Purpose |
|---|---|
| `OPENSMI_CONFIG` | Override config path |
| `OPENSMI_STATE_DIR` | Override state directory (useful for NFS/shared home) |
| `OPENSMI_PYTHON` | Override Python interpreter |
| `OPENSMI_GITHUB_TOKEN` | GitHub token to avoid API rate limits during update |
| `OPENSMI_BIN_DIR` | Override install directory (default: ~/.local/bin) |
| `OPENSMI_LOG_DIR` | Override log directory |
| `OPENSMI_LOG_LEVEL` | Log verbosity: DEBUG, INFO (default), WARNING, ERROR |
| `OPENSMI_REPO` | Override GitHub repo for update (default: seilk/opensmi) |
| `OPENSMI_TUI_BIN` | Override TUI binary path |

opensmi supports two distinct cluster setups:

Full feature set — allocation, enforcement, job dispatch, kill.

SSH directly into each GPU node; reads nvidia-smi for live GPU state.
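The agentless approach boils down to running `nvidia-smi` over SSH and parsing its text output. The Python sketch below uses real `nvidia-smi` query flags, but the choice of fields and the helper names are assumptions; it is not a copy of what opensmi runs.

```python
import csv
import io
from typing import Dict, List

# Real nvidia-smi flags; which fields opensmi requests is an assumption.
QUERY = ["nvidia-smi",
         "--query-gpu=index,memory.used,memory.total,utilization.gpu",
         "--format=csv,noheader,nounits"]


def ssh_command(user: str, address: str) -> List[str]:
    """argv for running the query on a node over SSH."""
    return ["ssh", f"{user}@{address}", " ".join(QUERY)]


def parse_gpu_csv(output: str) -> List[Dict[str, int]]:
    """Parse CSV rows into dicts of integers (memory in MiB, util in %)."""
    rows = csv.reader(io.StringIO(output), skipinitialspace=True)
    keys = ("index", "memory_used", "memory_total", "utilization")
    return [dict(zip(keys, map(int, row))) for row in rows if row]
```

A production parser would also need to handle non-numeric values such as `[N/A]`, which this sketch does not.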

Using opensmi's job dispatch (tmux/direct execution) on a cluster already running Slurm is not recommended:

- `CUDA_VISIBLE_DEVICES`: Slurm remaps GPU indices to 0-based; opensmi uses physical indices, so the two will conflict.

- Process lifecycle: opensmi tmux sessions run outside Slurm cgroups, bypassing Slurm's resource accounting.
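The index conflict is easiest to see with a tiny example. Assuming Slurm confines a job to its assigned devices and renumbers them from 0 in ascending order (an assumption about the cluster's Slurm setup), the mapping looks like this; `logical_to_physical` is a hypothetical helper.

```python
from typing import Dict, List


def logical_to_physical(assigned_physical: List[int]) -> Dict[int, int]:
    """Map the 0-based indices a job sees to the node's physical indices."""
    return {logical: physical
            for logical, physical in enumerate(sorted(assigned_physical))}
```

A job granted physical GPUs 2 and 3 sees them as 0 and 1, so a tool that exports `CUDA_VISIBLE_DEVICES=2,3` inside that job would be pointing at devices the job cannot see.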

opensmi can monitor a Slurm cluster as a read-only tab in the TUI — showing per-node GPU assignments, job owners, partition info, and GPU indices via scontrol.

Configure via slurm_clusters in opensmi.json. No access to compute nodes is required.

Local node: If opensmi runs on a GPU node itself, SSH is bypassed automatically — no loopback connection needed.

opensmi can execute remote commands over SSH (including process signals).

Treat the machine you run it on as an admin workstation.

See SECURITY.md.

- Architecture: docs/ARCHITECTURE.md

- Releasing: docs/RELEASING.md

- Changelog: CHANGELOG.md

MIT — see LICENSE.